Artificial intelligence is no longer confined to the cloud. As industries connect more sensors, cameras, and machines, intelligence is moving to the edge — where data is created and decisions must be made instantly. Edge AI enables this transformation by bringing real-time analytics, pattern recognition, and automation directly into local devices.

In this edition of the Apacer Intelligence in Motion Series, Apacer CEO Gibson Chen and Advantech Director of Emerging Business Offices Hank Lee share their viewpoints on how Edge AI is reshaping industrial infrastructure — and why reliability, data integrity, and sustainability now sit at the heart of intelligent design.

“At the edge, reliability isn’t a feature — it’s survival. Storage is the quiet engine that keeps intelligent systems thinking — efficiently, securely, and sustainably.”

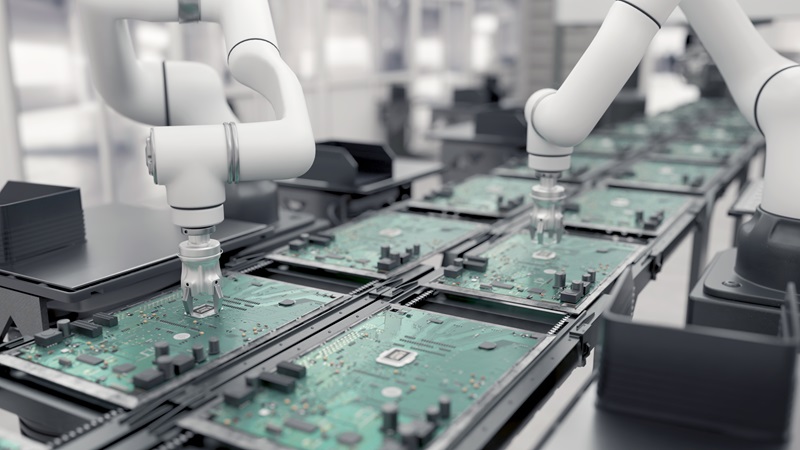

— Gibson Chen, President of Apacer

Across industries, computing power is migrating from the cloud to the edge, where AI models analyze and act on data in real time. Smart factories detect anomalies in milliseconds; transportation systems interpret sensor data to improve safety; healthcare devices perform diagnostics locally to preserve privacy. These are the realities of Edge AI — and they redefine what storage must do.

From Apacer’s field experience, edge devices operate under relentless workloads, often within compact, fanless enclosures exposed to dust, vibration, and temperature swings. Eliminating moving parts improves reliability, yet it also means storage must shoulder greater responsibility for overall system stability.

In smart factories, Edge AI exposes a hard truth: automation will never be more reliable than the weakest layer of its infrastructure.

While AI models and controllers often receive the spotlight, operational resilience is increasingly determined by how data is stored, protected, and sustained at the edge. When storage fails to maintain consistency under power stress, thermal constraints, or nonstop write cycles, intelligence degrades quietly—often long before systems visibly fail or alarms are triggered. This is why, in modern factories, storage should be viewed not as a capacity decision, but as a reliability strategy.
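Part of that consistency can also be protected in software. The following is a minimal sketch that assumes a Linux-based edge device and a hypothetical state file the application rewrites often. The new contents go to a temporary file, are flushed to the device with `fsync`, and are then atomically swapped into place with `os.replace`. On filesystems that honor these calls, a power cut leaves either the old or the new version on disk rather than a half-written one. This complements power-loss protection in the storage hardware itself; it does not replace it.

```python
import os
import tempfile


def atomic_write(path: str, data: bytes) -> None:
    """Replace the file at `path` so readers see either the old or the new contents."""
    directory = os.path.dirname(os.path.abspath(path))
    # Temporary file in the same directory, so the final rename stays on one filesystem.
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".tmp-")
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(data)
            tmp.flush()
            # Ask the OS to push the new bytes to the storage device before the swap.
            os.fsync(tmp.fileno())
        # os.replace is an atomic rename on POSIX when source and target share a filesystem.
        os.replace(tmp_path, path)
    except BaseException:
        # Remove the temporary file if anything failed before the rename completed.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise
    # Persist the directory entry so the rename itself survives a power cut (POSIX only).
    if hasattr(os, "O_DIRECTORY"):
        dir_fd = os.open(directory, os.O_RDONLY | os.O_DIRECTORY)
        try:
            os.fsync(dir_fd)
        finally:
            os.close(dir_fd)
```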

To support AI inference and local data analytics, storage at the edge must be designed to deliver:

At the edge, reliability becomes a foundational requirement rather than an optional feature. As storage moves closer to where AI inference and decision-making occur, it must transition from a passive component into a core enabler of system continuity and trust.

Industry research reinforces this shift. IDC projects global edge-computing spending to reach approximately US$260 billion by 2025, while Gartner notes that a growing share of enterprise data is created and processed outside centralized data centers. Together, these trends point to a clear reality: the edge is already intelligent, and long-term reliability is what sustains that intelligence in real-world operations.

“At scale, the real challenge of Edge AI is not model sophistication, but building systems — supported by open ecosystem thinking — that can operate reliably across diverse, real-world environments.”

— Hank Lee, Director of Emerging Business Offices, Advantech

Why Edge AI Struggles to Scale Beyond Pilot Deployments

The biggest challenge enterprises will face when scaling Edge AI over the next two to three years will not be the AI models themselves, but the friction created by infrastructure fragmentation. Many enterprises successfully complete proof-of-concept (PoC) deployments in controlled lab environments, yet struggle when attempting to replicate those results across dozens of factory sites. At that point, they encounter a complex mix of legacy equipment, diverse communication protocols, inconsistent connectivity, and varying hardware architectures. The result is a cycle of successful pilots that never reach production scale, a pattern often referred to as the “pilot loop.”

As computing power continues shifting from centralized cloud environments toward the edge, managing this level of heterogeneity manually becomes increasingly impractical. The core challenge, therefore, is moving away from bespoke, one-off projects toward standardized, repeatable infrastructure models that can scale reliably across sites. From an architectural perspective, software-defined approaches at the edge increasingly play a role in abstracting hardware differences and unifying data handling. At the same time, such approaches depend on foundational hardware components capable of operating consistently in harsh industrial environments across thousands of deployments. Without stable physical foundations, efforts to scale software platforms risk amplifying operational complexity rather than reducing it.
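To make the abstraction idea concrete, here is a minimal Python sketch. Every class name and device format in it is hypothetical. One driver stands in for a legacy device that reports temperature as an integer in tenths of a degree Celsius, and another for a newer device that publishes JSON in degrees Fahrenheit. Both return the same normalized record, so the pipeline code in `collect` stays identical from site to site.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Reading:
    """Unified record that every driver returns, regardless of the device behind it."""
    sensor_id: str
    celsius: float


class SensorDriver(ABC):
    """Hypothetical abstraction layer: callers never see protocol or encoding details."""

    @abstractmethod
    def read(self) -> Reading:
        raise NotImplementedError


class LegacyRegisterDriver(SensorDriver):
    """Hypothetical older device exposing temperature in tenths of a degree Celsius."""

    def __init__(self, sensor_id: str, register_value: int) -> None:
        self.sensor_id = sensor_id
        self.register_value = register_value  # stands in for a real fieldbus read

    def read(self) -> Reading:
        return Reading(self.sensor_id, self.register_value / 10.0)


class JsonFahrenheitDriver(SensorDriver):
    """Hypothetical newer device publishing JSON payloads in degrees Fahrenheit."""

    def __init__(self, sensor_id: str, payload: Dict[str, float]) -> None:
        self.sensor_id = sensor_id
        self.payload = payload  # stands in for a message received over the network

    def read(self) -> Reading:
        return Reading(self.sensor_id, (self.payload["temp_f"] - 32.0) * 5.0 / 9.0)


def collect(drivers: Iterable[SensorDriver]) -> List[Reading]:
    """Site-independent pipeline code that depends only on the abstract interface."""
    return [driver.read() for driver in drivers]
```

A production interface would also cover connection handling, error reporting, and timestamps. The point here is only that site-specific differences live inside the drivers, not in the shared data pipeline.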

What It Takes to Build Flexible, Scalable Edge Intelligence

As these systems scale, flexibility becomes essential. Edge AI workloads evolve rapidly in response to changing business conditions, and sustaining this pace requires decoupling software innovation from hardware dependencies so systems can adapt without being constrained by fixed infrastructure. This shift has driven broader industry discussion around more open, ecosystem-based approaches to edge deployment.

To stay up to date, enterprises need two things. First, they require a robust Over-the-Air (OTA) mechanism that can update containerized AI models reliably, without leaving devices inoperable if an update fails. This enables developers to deploy new logic consistently across large numbers of distributed edge devices, supporting continuous improvement at scale.
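One common pattern for this kind of fail-safe update is an A/B (dual-slot) scheme. The sketch below is a simplified, in-memory illustration under stated assumptions, not any vendor's OTA implementation. `ModelSlots`, `apply_update`, and the `health_check` callback are hypothetical names. The sequence is:

1. Check the update's SHA-256 digest.
2. Stage the new model in the inactive slot.
3. Switch over to the new slot.
4. Roll back to the previous slot if the new model fails its on-device check.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class ModelSlots:
    """Hypothetical A/B layout: one slot serves inference while the other receives updates."""
    images: Dict[str, Optional[bytes]] = field(default_factory=lambda: {"A": None, "B": None})
    active: str = "A"

    def inactive(self) -> str:
        return "B" if self.active == "A" else "A"


def apply_update(
    slots: ModelSlots,
    image: bytes,
    expected_sha256: str,
    health_check: Callable[[bytes], bool],
) -> bool:
    """Stage, verify, switch, and roll back on failure. Returns True if the update sticks."""
    # 1. Reject corrupted or tampered downloads before touching either slot.
    if hashlib.sha256(image).hexdigest() != expected_sha256:
        return False
    # 2. Stage the new model in the inactive slot; the active slot keeps serving.
    target = slots.inactive()
    slots.images[target] = image
    previous = slots.active
    # 3. Switch over, then verify the new model on the device.
    slots.active = target
    if not health_check(image):
        # 4. Roll back to the last known-good slot if the check fails.
        slots.active = previous
        return False
    return True
```

Because the currently active slot is never overwritten during an update, a failed check leaves the device running its last known-good model.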

Second, enterprises need hardware capable of handling this intense pace of change. Frequent model retraining and constant inferencing at the edge place significant strain on system resources. High-performance computing is ineffective if storage bottlenecks slow data ingestion or model loading. Maintaining flexibility therefore depends on industrial-grade memory and storage with high endurance and sustained performance, able to support the rapid iteration cycles of modern AI workloads.
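Endurance requirements can be estimated with simple arithmetic before a drive is selected. This is a minimal sketch with illustrative inputs: a hypothetical workload of 40 GB of host writes per day, a five-year service life, and a 1.5x safety margin. None of these are Apacer or Advantech figures. The result can be compared against the rated TBW (terabytes written) on a datasheet, keeping in mind that such ratings assume a reference workload. It can also be converted to drive writes per day (DWPD) for a given capacity.

```python
def required_tbw(host_gb_per_day: float, service_years: float, margin: float = 1.5) -> float:
    """Host terabytes written over the service life, including a safety margin (1 TB = 1000 GB)."""
    return host_gb_per_day * 365 * service_years * margin / 1000


def dwpd(tbw: float, capacity_gb: float, service_years: float) -> float:
    """Express a TBW figure as full drive writes per day for a drive of the given capacity."""
    return tbw * 1000 / (capacity_gb * 365 * service_years)


def meets_endurance(rated_tbw: float, host_gb_per_day: float, service_years: float,
                    margin: float = 1.5) -> bool:
    """True if a drive's rated TBW covers the estimated lifetime writes."""
    return rated_tbw >= required_tbw(host_gb_per_day, service_years, margin)
```

With these assumptions, `required_tbw(40, 5)` gives 109.5 TB, which corresponds to roughly 0.23 drive writes per day on a 256 GB drive.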

Why Edge AI Requires an Ecosystem, Not Isolated Technologies

Edge AI is reshaping how industries think, build, and operate — decentralizing intelligence and redefining performance at every layer of infrastructure.

As intelligence moves closer to where data is created, every component in the ecosystem must evolve. Processors must support distributed workloads, networks must deliver low-latency communication, and storage must safeguard data to enable real-time decision-making.

To truly enable intelligence at the edge, technology design must be rethought as a system rather than in isolation. Only through coordinated evolution across hardware and software can industries build edge infrastructures that are not only intelligent, but also sustainable and trustworthy. This collaboration — spanning component design, system architecture, and operational deployment — is what transforms the edge from a data endpoint into a platform for continuous learning and innovation.


Apacer and Advantech share a common vision: to support this transformation through reliable, scalable technologies that bring intelligence closer to where data truly matters. If reliable storage is the engine of survival, an intelligent platform is the navigation system. The real challenge of Edge AI isn't building the model; it's orchestrating it across thousands of devices without breaking the operation.

Stay tuned for the next Intelligence in Motion edition, where we’ll continue to explore how emerging technologies are driving the evolution of intelligent industries.